

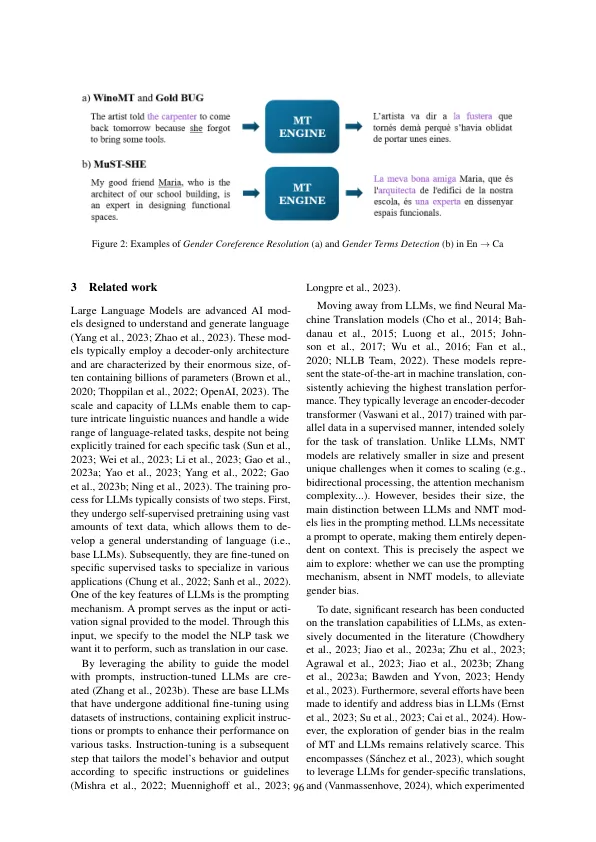

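In the domain of machine translation (MT), gender bias is defined as the tendency of MT systems to produce translations that reflect or perpetuate stereotypes, inequalities, or assumptions rooted in cultural and societal biases (Friedman and Nissenbaum, 1996; Savoldi et al., 2021). Such bias can harm certain groups in two ways. It can cause representational harms (i.e., the misrepresentation or underrepresentation of social groups and their identities). It can also cause allocational harms (i.e., the allocation or withholding of opportunities or resources to certain groups) (Levesque, 2011; Crawford, 2017; Lal Zimman and Meyerhoff, 2017; Régner et al., 2019). It is therefore crucial to thoroughly investigate and mitigate its occurrence. Nonetheless, addressing gender bias is a multifaceted task. Gender bias is a pervasive issue across generative NLP models, and LLMs are no exception.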

Evaluating and Mitigating Gender Bias in MT with LLMs